Back to part one: The domestication of humans

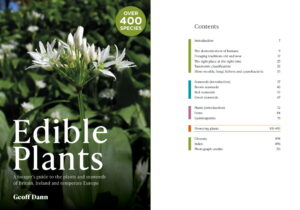

This material was originally published as chapter 2 of my book Edible Plants: a forager’s guide to the plants and seaweeds of Britain, Ireland and temperate Europe. Signed copies available here, also available from Amazon and other online retailers.

Old traditions

Humans continued to depend on foraged food long after the development of farming. For the rich, wild food primarily meant hunting game, along with a few prized fungi. For everybody else, but especially the rural poor, foraged plants and seaweeds provided an essential backup for times when access to cultivated food was compromised by famine or conflict. Another element of the old tradition was the appeal of diversification even in non-famine times. In poor rural communities the cultivated food was monotonous, and since a lot of wild food is strongly flavoured, it could, with the help of some creative cookery, be used to liven up an otherwise dull diet. It also increased access to vitamins and minerals, but they probably didn’t know that.

By the start of the 20th century, these traditions and the associated knowledge had almost died out in north-west Europe, and were heading that way in the south and east too. A 2012 scientific paper called Wild food plant use in the 21st century: the disappearance of old traditions and the search for new cuisines involving wild edibles (Łuczaj, Pieroni, Tardío, Pardo de Santayana, Sõukand, Svanberg and Kalle, vol. 81 of Acta Societatis Botanicorum Poloniae) highlighted the waning of the main motive for keeping the old foraging traditions alive: fear of famine. In 19th century Europe, famine had still been a clear and present danger. Though the potato blight of 1844-49 is most frequently associated with Ireland, it affected much of northern Europe and was the most recent serious European food crisis caused by something other than conflict. As memories of famine began to fade, so did folk knowledge of edible wild plants, and by 2012 only the elders of a few rural communities still retained any at all. Among this oldest generation, knowledge survived of two main categories of emergency food: things that could be used to bulk out flour as grain supplies dwindled, and pot-herbs.

A tradition survives in some places, particularly in southern Europe, of creating dishes containing a blend of an enormous variety of wild plants (40, or perhaps even 50). Ethnobotanist Timothy Johns has theorised that this behaviour was directly linked to the need to preserve knowledge that might prove critical in times of scarcity – even though some plants in the blend were inferior, knowledge of their edibility might one day save lives. Some of these multi-species wild dishes have even evolved into local, modern culinary specialities like Frankfurter green sauce, Easter ledger pudding and the Italian wild green salad misticanza. Perhaps the best example is from Dalmatia in Croatia. Mišanca isn’t a dish, or even a specific blend of wild plants, though it always includes an Allium such as wild onion. As with misticanza, the name means ‘mixture’, and traditionally it included upwards of 20 wild species, including grasses and flowers. It is used in many different dishes, and selecting the perfect mixture for a specific use has become the trademark of a high quality chef in the region.

Waning fear of famine wasn’t the sole reason for the decline of old traditions. Ecological changes also altered the availability of many types of wild plant, mostly due to an increase in intensive industrial farming methods. More livestock, especially cattle, means more animal waste finding its way into watercourses, which changes the plants that grow there and makes foraging for aquatic plants less attractive and less safe. Modern arable farming has had an even greater effect on wild species. Quite a few traditionally foraged plants are arable weeds that were once manually harvested while the main crop was brought in, but have become rare as a result of the large-scale spraying of herbicides.

Books

The old foraging traditions were oral, passed down directly from one generation to the next, most frequently by women. Before the 20th century, there were very few books whose main subject was foraging for wild food. There were, however, plenty of books about the alleged medicinal properties of plants, both wild and cultivated, some of which included information about food and other uses.

William Turner began his career as a naturalist. He studied at Cambridge University and his ground-breaking 1538 book Libellus de re Herbaria novus (‘New book about plants’) was the result of a great deal of time spent seeking and studying plants in their natural habitat. He went on to study medicine in Italy, and his A new herball (published in three parts between 1551 and 1568) was the first book written in English to describe the “uses and vertues” of wild European plants.

John Gerard was a botanist and horticulturalist. His Herball, or Generall Historie of Plantes, (1597) is both more famous and more controversial than Turner’s book. Authoritative writing about wild plants demands that you actually find the plants in question, but your chances of finding all the plants you would like to write about are remote, even if you spend many years searching far and wide. Gerard travelled very little (though he claimed otherwise). Instead, he created a herbal garden, in which he grew interesting plants sent to him as seeds, including many that weren’t native to the British Isles. This allowed at least some original research on new plants, and provided the opportunity to check for himself some of their alleged virtues. He also indulged in quite a lot of plagiarism of the work of Flemish herbalists Rembert Dodoens and Matthias de Lobel, and French horticulturalist Charles de l’Écluse. His book re-used 1,800 botanical woodcuts from other sources (re-using Dodoens’ woodcuts would have given the game away). Though hugely successful, Gerard’s Herball eventually became infamous for its mistakes – some inherited from the works he copied, some the result of borrowed text being wrongly matched with borrowed illustrations, and one a case of wrongly believing he’d seen evidence of a tree that bears geese as fruit.

Two other books deserve a mention here. Botanist and horticulturalist John Parkinson wrote the largest book in this genre ever published (Theatrum Botanicum, 1640), covering 3,800 plants, including kitchen garden plants and orchard trees. Nicholas Culpeper was a pharmacist and astrologer, and his 1652 book The English Physitian (later re-titled The Complete Herbal) was a bestseller, not least because of its anti-establishment humour and accessibility for the common people. It was written in ordinary language, and sold at a much more affordable price than any of its rival publications.

The works of Gerard and of Culpeper remained in use for the next two centuries. It wasn’t until 1868 that a recognisably modern book appeared on the uses of British wild plants: The Useful Plants of Great Britain by C. Pierpoint Johnson. Along with the medical and veterinary information, the book describes many other uses of wild plants – as sources of dyes, textiles and wood for woodworking and fuel, and providing fodder for animals and food for people. It also included a few seaweeds and fungi, both of which were classed as plants at the time. The book you are reading [Edible Plants, see link above] also contains some quotes from British Poisonous Plants (1856), which was written by another Johnson (Charles), not to be confused with C. Pierpoint. Notable books purely about edible and poisonous fungi were published in 1886 (William Delisle Hay’s Elementary Text-book of British Fungi) and 1891 (M.C. Cooke’s British Edible Fungi).

These books did not ignite a popular foraging revival. The zeitgeist of the late Victorian era was of progress and prosperous technological modernity, not the preservation of apparently obsolete folk knowledge. It was the zenith of the British Empire, with all manner of exotic foreign foods on offer, at least for those who could afford them, so there was little motivation for rediscovering the joys of foraging for native wild species. For those without wealth, there was no time for such hobbies, or no access to the countryside. Though a low point for wild food, this was a golden age for British cookery. An eight-volume behemoth published in 1892 – The Encyclopedia of Practical Cookery: A Complete Dictionary of all Pertaining to the Art of Cookery and Table Service edited by Theodore Francis Garrett – contains entries for obscure edible plant products from distant corners of the empire, but mentions only a tiny fraction of the wild European species in this book. Not even Ramsons made the cut. It does, however, include 68 recipes for chestnuts and ten for dandelion, and quite a few that made it into other parts of this book.

This period of confident abundance came to an abrupt end in 1914. The First World War prompted increased British interest in edible/useful wild plants, especially those of medicinal relevance. German pharmaceutical companies had been very dominant in the period before the conflict, so this was a necessity (see: Britain’s Green Allies: Medicinal Plants in Wartime by Peter Ayres, 2015). In addition to the medicinal plants, horse chestnuts were collected to make soap and sphagnum moss for dressing wounds. Books specifically about wild food made their first appearance shortly after. In the preface of The Wild Foods of Great Britain (1917) LCR Cameron wrote: “The incidence of war has brought home to its inhabitants that an island like Britain is not self supporting, and that scarcity, if not actual want of food is daily becoming more possible…” Cameron’s 260 kinds of wild food included hedgehogs and frogs (both now protected), but the section on plants is disappointingly small.

Between the wars Florence White, who had founded the English Folk Cookery Association in 1928, wrote two relevant books: a book of traditional recipes called Good Things in England (1932), which remains in print, and Flowers as Food (1934). Then during the Second World War things got more serious again. In a 1939 book also called Wild Foods of Britain, Jason Hill called for people to “reinforce the national larder”. The collection of one particular wild food was even organised by the government: rosehips, which provide the highest concentration of vitamin C of any European wild food and so replaced the lost supplies of citrus fruits. The pamphlet on rosehips generated a great deal of interest, and its author – nutritionist Claire Loewenfeld – received thousands of letters requesting information about other types of wild food. Six more pamphlets followed under the name Britain’s Wild Larder.

When the war was over, Loewenfeld began work on a series of books under the same name, to make available the wild food folk knowledge uncovered by wartime research. The word ‘larder’ (even after ‘wild’) conjures visions of domesticity, and these books have a different tone to the foraging guides that came after. There is no romanticising of hunter-gathering and no mention of high cuisine, just practical information about the potential role of wild foods in hard times. The introduction starts with “The world is apprehensive about food shortages”, and goes on to warn how overpopulation, soil depletion and potential disruption to our food distribution systems “naturally cast a dark shadow on our future without taking further account the prospects of shortage caused by possible war”. The recipe selection was influenced by the limitations of a decade of post-war austerity and rationing. In the end only the first two books were published, on Fungi (1956) and Nuts (1957). Perhaps by that time people had had their fill of scarcity and were beginning to feel more positive about the future.

New traditions

The wild food culture of 21st century Europe can trace its roots to the hippy movement of the late 1960s and early 1970s, and the iconic Food for Free by Richard Mabey (first published in 1972). That the food was free wasn’t really the point. For some people it was a move towards healthier, more ethical food: wild is as organic as it gets, and most wild foods are the diametric opposite of the carbohydrate-rich, fibre-poor, highly-processed diet of many modern westerners. For others it was a way to bypass the system and search for their inner hunter-gatherer (Mabey described it as “a poke in the eye for domesticity”). But very few pioneers of the new tradition had genuine concerns about their own food security or that of the western world in general. When Cameron’s 1917 book was republished in 1977 the original preface about food scarcity wasn’t included, and the new preface was all about increasing the variety of fresh and unpackaged food in our diet. And when a single volume version of Britain’s Wild Larder came out in 1980, having been updated by Patience Bosanquet six years after the author’s death, all talk of food shortages had been replaced with “interest in the countryside”, “fresh air and exercise” and “trying new flavours in our cookery”.

By the end of the 1990s a radical new sort of high cuisine was emerging, with wild food offering new avenues for culinary adventurers to explore. Probably the most famous restaurant in northern Europe is René Redzepi’s Noma in Copenhagen, which specialises in re-inventing Nordic cuisine and continually uses foraged food in inventive ways. It is the Neolithic situation reversed: the wild food has become exotic and high status, and the farmed fare taken for granted.

Also part of the new culture are the tales of miraculous escapes from emergency situations, the military-style survivalism of Bear Grylls, and reality TV shows where people compete to survive on remote, uninhabited islands. While these are examples of foraging as a response to hunger, they have little to do with the everyday lives of ordinary people; most of us don’t anticipate ending up in situations like these.

For half a century, the foraging culture in the western world has been more of a break with the past than a continuation of older traditions. This is true not just in the British Isles but also in other parts of Europe, such as the Baltic states, where we might assume the culture was more continuous (see, for example, Changes in the Use of Wild Food Plants in Estonia: 18th – 21st Century, Renata Sõukand and Raivo Kalle, 2016).

The future

The search for new cuisines goes on, social media having turned it into a giant collective effort, as chefs and enthusiasts all around the world share their latest wild creations. But what about the longer term future?

Rewilding and regrounding

The term ‘rewilding’ is usually applied by conservationists to places. It is a process whereby the wild world is allowed to reclaim an area, sometimes helped by the intentional re-introduction of lost species. The term has also been applied by anthropologists and philosophers to people: learning how to forage for wild food is a way to undo some of our domestication. The mental health crisis isn’t going away, and spending time outdoors engaging with the wild world as nature intended is seen as a means of psychological ‘regrounding’. It is a way to help cope with the cognitive dissonance caused by knowing resources are finite while you’re trapped in a world of perpetual overconsumption watching the capitalist machine banging the drum of ‘progress’.

There is a growing trend for doing this with children as well as adults tired of the rat-race. Teach them in the woods, where it is wilder and muddier, rather than in the sanitised indoor world and the artificial environment of playgrounds. Maybe sow a few mental seeds and hope the next generation is slightly less messed up than we are.

Some anarcho-primitivists try to take it much further, believing the solution to our problems is a post-civilisation hunter-gatherer ‘an-prim society’, free from super-tribal power structures. But how could we possibly get from here to there? Wild food, on its own, never supported more than fifty million people globally and there are eight billion of us now. The Holocene ecosystem is gone, the sixth mass extinction is already underway, and climate change looks unstoppable. Appealing though it may be to some romantics and revolutionaries, going backwards isn’t an option. Human rewilding can only be for individuals or small groups, and for most of them only temporarily. As a society, we have to find a way forwards from where we are now.

Collapse

Anarcho-primitivists aren’t the only people anticipating a post-industrial-agricultural world. There is a growing fear that civilisation as we know it is already in the early stage of collapse. There is no sign of political or economic change on the scale that would be needed to save it, either globally or nationally. The UK is no nearer to food self-sufficiency than it was when rationing ended in 1954, but most people don’t even consider this to be a problem. After all, we’ve been ignoring warnings about overpopulation and food crises since Thomas Malthus wrote about them in 1798. Why listen now?

The past isn’t a reliable guide to the future. In the spring of 2020, for the first time in the supermarket age, westerners got a shocking taste of empty shelves. In the UK it took just a few days to go from relative normality to full-scale panic, and a wide range of products became scarce, including long-life dried and canned food. The Covid-19 pandemic exposed the fragility of our supply chains. As the future becomes increasingly uncertain, alternatives to our regular food sources will become more important, especially in shorter-term emergencies.

Unfortunately, in the longer term our wild larder can never replace the vast amount of food we import; there are far too many people and nothing like enough remains of the wild world. If things get tough, the most attractive and less abundant wild foods will quickly be foraged into oblivion, and this worsens as the ratio of human population to available area for foraging increases. This problem was identified as serious in Epping Forest (right next to London) several years ago, and that was without food supply concerns. The response was a total fungi foraging ban that may be a sign of things to come: a similar situation already exists on some parts of the British coast with some seaweeds. It is hard to imagine running out of dandelions, blackberries or wracks, and not many people will be bothered if entire stands of non-native species like alexanders are taken for food, but wild food on its own won’t be enough. If we want to avoid total collapse while there’s still something worth saving and finally build a sustainable society, then a more permanent solution will be needed.

Permaculture?

Is a sustainable post-industrial agricultural society possible? This was another question being asked in the late 1960s, and the answer that emerged in the early 1970s, along with the fledgling environmental movement and new foraging culture, was permaculture. It is a philosophy encompassing food production and ecology, with a goal of establishing a new balance between humans and the ecosystems we inhabit: “The conscious design and maintenance of agriculturally productive ecosystems which have the diversity, stability, and resilience of natural ecosystems.” The name means ‘permanent agriculture’.

Permaculture and anarcho-primitivism have some important similarities. Both view conventional agriculture as harmful, and seek a new food culture that works in harmony with nature instead of attempting to dominate it. The godfather of permaculture – Bill Mollison – drew deeply on indigenous practices, rather than inventing something entirely new. Permaculture does away with neat domestic vegetable beds and industrial crop monocultures. It creates wild-looking spaces with their own mini-ecosystems designed to produce ‘abundance’, which is then harvested in a more foraging-like way than traditional crops. A lot of the initial work involves observing the land and then working with it, rather than imposing upon it. Permaculture is a whole system, from sacrificial crops to keep the birds happy, who will also eat the pests, to companion plants that bring up nutrients from deep underground or suck nitrogen from the air, to ducks to eat slugs and chickens to eat insect grubs, both providing manure. If you are going to plant a boundary hedge, why not make it an edible hedge, so it also provides food on both sides? Future foragers will be very grateful.

Permaculture also reaches beyond food production. The intention is to replace conventional agriculture, not merely as a set of procedures, but as a way of life, just as agriculture replaced hunter-gathering. For this to work, the worst mistakes of the past must not be repeated. The three equal foundational ethics of permaculture, as originally proposed by Mollison, were Care of the Earth, Care of people and Setting limits to population and consumption. The first two are relatively unproblematic, but the third has faced stiff resistance from people who want to replace it with principles demanding fair shares or redistribution of surpluses, or to hide it, watered down, in a principle about ‘future care’. Fairness is a worthy goal, but will become increasingly unachievable, especially on a global scale, in the context of a deglobalising and collapsing techno-industrial civilisation. Physical limits are an inescapable feature of the world we live in, and our politics must adapt to them, not the other way around. The unconditional acceptance of limits to growth is necessary as a foundational principle for any sustainable system operating in a finite physical space, be it agricultural, social, political, economic or all of these things. Either we choose to set, and abide by, realistic limits to the human operation on Earth, or the rest of the ecosystem will find ways to impose limits whether humans like it or not. The former is a prerequisite for the “conscious design and maintenance of agriculturally productive ecosystems” – it is permaculture on the grandest scale, and our only realistic hope for a Good Anthropocene. The latter entails the unconscious evolution of an ecosystem under intense selective pressure to counterbalance human dominance: a Bad Anthropocene.